|

All 5 books, Edward Tufte paperback $180

All 5 clothbound books, autographed by ET $280

Visual Display of Quantitative Information

Envisioning Information

Visual Explanations

Beautiful Evidence

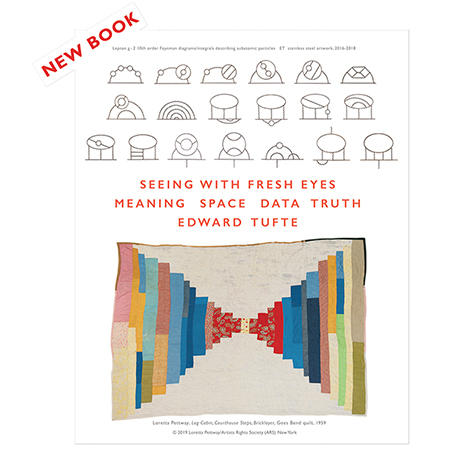

Seeing With Fresh Eyes

catalog + shopping cart

|

Edward Tufte e-books Immediate download to any computer: Visual and Statistical Thinking $5

The Cognitive Style of Powerpoint $5

Seeing Around + Feynman Diagrams $5

Data Analysis for Politics and Policy $9

catalog + shopping cart

New ET Book

Seeing with Fresh Eyes:

catalog + shopping cart

Meaning, Space, Data, Truth |

Analyzing/Presenting Data/Information All 5 books + 4-hour ET online video course, keyed to the 5 books. |

What is the reason, if known, that time on the computer seems to pass so quickly: that computer time seems to be different and "faster" than "normal" time in other settings? If this hasn't been studied and reported, what are contributors' speculations? J. D. McCubbin

-- J.D. McCubbin (email)

Can we put this into a more general class of problems? Such as failure to scan airplane instruments because of too much time spent focusing on one reading? The cell-phone trance impairment while driving? The apparent rapid passage of time while SCUBA diving? The slowing of time while standing in line?

Maybe there is a literature on apparent time and our internal clocks.

For the computer there is an apparent intense stimulus-response pace that is faster than normal.

I'll bet George Miller, the Princeton psychologist, could tell us.

-- Edward Tufte

Thank you for your response and suggestion to generalize. The first two examples are the "tunnel vision," aka lack of situational awareness, problem(s) well known to aircraft safety people. I have not experienced the (rapid) passage of "outside" time while scuba diving; I have experienced the expansion of available time while learning to juggle. Your example of "slow" waiting time is certainly real. "Apparent time" has a separate meaning outside this question. Maybe "subjective time" would be useful instead. "Time-gap experience" seems to apply, too, especially to computer/web use. And "time" and "hard time," i.e. jail and prison time, are certainly reported to go v-e-r-y slowly. Your reply suggests the "write gun" (raster scan rate?) influences the "user's" perception of time. I know pilots/weapons officers report a distortion of time during combat/training/simulator sessions.

So perhaps my general question might be better stated: what influences one's "subjective time," and in which way -- speeding or slowing -- vis-a-vis societal/clock time; especially with regard to computer/computing influences? And "why?" and "how?" Maybe it's as simple as the visual and mental engagement making one less aware of the passage of "outside" time. Or maybe it is as subtle as one's subconscious perception of the scan rate.

Thank you. J. D. McCubbin

-- J.D. McCubbin (email)

One theory I have seen about time dragging and flying is that there is no "internal clock" within our brains and we judge the passage of time via external events.

This means that time passes much more quickly when things are happening than when nothing is happening and we are bored.

When using a computer, things tend to happen in punctuated jumps. There is rarely an instantaneous response and flow of events, especially in a GUI-fied system which runs using an event-driven approach. Now if these delays are of sufficient length they become stretches of boredom and frustration and may, at first, seem to make the computer time go more slowly. I have seen studies suggest that this length of time is typically 7 seconds (incidentally the same length of time a rail crossing barrier needs to be down, with no apparent activity, before a driver will attempt to drive around it).

If these pauses are long enough and frequent, then there is an obvious, conscious wasting of time. However, many shorter lapses are less noticeable as the brain is distracted by other things - controlling the computer, reading the interface, understanding what's going on, anticipation, etc., etc.

However, the brain judges time by the activity the user manages to complete, the actual stimulating (as opposed to mind wandering) events. As these are actually separated by the periods of "dead time" the brain assumes that less time has passed than actually has passed. This "dead time" will probably be within the user's patience limits (e.g. the roughly 7 seconds mentioned above).

The above is merely surmised from my own experience and based on little knowledge (and is therefore a dangerous thing). I don't expect it to hold water, but put it forward as I would learn more from it being taken apart than from holding back.

-- Adam

Wouldn't Einstein's well-known response to the question, "What is relativity?" apply here? As you may know, he replied, "Two minutes sitting next to a pretty girl can seem like two seconds; two minutes on a hot stove can seem like 2 hours. That is relativity."

I doubt our ancestors' perception of time was much different than ours: the country cousin would say a day on the farm could feel a week long, the city cousin would say the pace of the city could make a day seem like very few hours. Whether it's 2003 or 1803, it's all relative.

-- Peter Pehrson (email)

I have migrated through various iterations of desktop tax preparation software packages (and various iterations of the hardware on which the software runs). The increase in speed (primarily driven by the hardware) in calculating and displaying a graphical facsimile of a tax form increases efficiency more than the literal reduction in time would suggest (my anecdotal observation). I think this is because the quicker the visual response, the less time there is for the brain to move to some other function (even if it is daydreaming). So the brain stays engaged with the matter at hand rather than having to re-engage after it disengages while waiting.

-- Gene Prescott (email)

Two books have interesting insight on this very question:

Flow -- Mihaly Csikszentmihalyi From Amazon.com's review: "In work, sport, conversation or hobby, you have experienced, yourself, the suspension of time, the freedom of complete absorption in activity. This is "flow,"...

and

The User Illusion: Cutting Consciousness Down to Size -- Tor Nørretranders, et al. Time delays at the millisecond level distort consciousness and the experience of the passage of time, as I recall.

I think time and our experience of "it" is a fascinating part of any design work. Thanks for posing the question.

Tracy Puett

-- Tracy Puett (email)

I've recently completed a study of the flow experiences of Web users (Pace 2004). One of the most common dimensions of the flow experience described by the study's informants was a distorted sense of time. In each case, informants reported that time seemed to pass much faster than usual during flow.

The informants' variable experience of the passage of time is what Damasio (2002, p. 50) calls 'mind time'. He contrasts this with 'body time' or the biological clock in the brain's hypothalamus that regulates processes within the body. Mind time can seem fast or slow, short or long, and 'this variability can happen on different scales, from decades, seasons, weeks and hours, down to the tiniest intervals of music'.

Some of the informants in my study pointed to attention as the key factor affecting the variability of their time judgements. For example, one informant said, 'When you're interested in something, it keeps your attention. And when your attention is on one thing, you're not thinking of time'.

Brown and Boltz (2002, p. 600) agree that 'attention is one of the most important psychological processes that regulate the experience of time'. Our experience of time changes with the amount of attention that we direct towards it. When one is attentive to time, as in the case of waiting for a boring lecture to finish, time seems to pass relatively slowly. In contrast, when one is inattentive to time, as in the case of being absorbed in some activity, time seems to pass relatively quickly.

These common observations about the relationship between time and attention have been confirmed in controlled experiments conducted by various researchers. Brown and Boltz (2002) and Grondin (2001) describe a dual-task strategy that has been employed in some experiments. Individuals are asked to perform a temporal task and a non-temporal task simultaneously. The temporal task involves keeping track of time for an upcoming time estimate. The non-temporal task acts as a distraction. Many of these dual-task experiments show what is known as the interference effect. The non-temporal distracter task disrupts the temporal task, making time judgements shorter, more inaccurate or more variable in comparison with a single task control. Many researchers interpret the interference effect in terms of the limited-capacity model of attention. Both tasks compete for a common pool of mental resources, with the result that timing receives a sub-optimal level of attention.

References

Brown, S. W. & Boltz, M. G. 2002, 'Attentional processes in time perception: Effects of mental workload and event structure', Journal of Experimental Psychology: Human Perception and Performance, vol. 28, no. 3, pp. 600-615.

Damasio, A. R. 2002, 'Remembering when', Scientific American, vol. 287, no. 3, pp. 48-55.

Grondin, S. 2001, 'From physical time to the first and second moments of psychological time', Psychological Bulletin, vol. 127, no. 1, pp. 22-44.

Pace, S. 2004, 'A grounded theory of the flow experiences of Web users', International Journal of Human-Computer Studies, vol. 60, no. 3, pp. 327-363.

-- Steven Pace (email)

Two other interesting time distortions are:

1. "Bullet Time" (thanks to The Matrix for its new name) or slow motion. This is usually experienced in an emergency situation, like an automobile accident.

2. "Satori" The feeling of perfect execution of an activity in time and space. Usually experienced in martial arts or artistic performances (my definition).

In the rare instances that I've experienced these distortions the events had me completely immersed. Has anyone experienced these types of abnormalities?

Sean

-- Sean Gerety (email)

I think any good music should present some glimmer of both, Sean.

As requested in the original post, here is my speculation.

Everyone enjoys different music. If the music is compatible, near-note prediction by the listener seems to be able to occur even when the listener has never heard the track before. The rhythms found in music are probably closely matching the natural thought rhythms of the listeners. For example, we hear a teenager's catastrophic punk, a young professional's city-paced techno, or an intellectual's classical.

I think everyone has an interest in listening to the music of one's younger years. This probably has more to do with finding and re-experiencing the rhythm of your brain when you first heard them. The rhythm seems to be an attribute recorded with emotion. If you can find just the right rhythm, it can help you re-experience the emotion. I would even speculate that deja vu could be blamed on unexpected rhythm of events.

The interest in experiencing bullet time in the movies is probably rooted in the emotional experience of a real bullet time as you describe. The hyperawareness of panic draws millions to extreme sports every year. I think people often describe bullet time as simply a rush. When speaking of perceiving time, describing overstimulation and hyperawareness of events as a rush makes more sense.

But back to computers and the original question. Computers are very modular things. We can change the way they react to our input, change what they do, change how they do particular things and ultimately work in our own rhythm. I think anyone who has tried to teach a computer task to another person finds it nearly unbearable to watch someone else move the mouse differently, not use certain keyboard shortcuts, hunt for buttons, or close a program in some way you do not. The reaction is to want to say "move", sit down and complete the task the way you would get it done.

Switching yourself to a new and different operating system is such a break from the rhythm we painstakingly developed over years of use that we tend to avoid change at all costs. People even mock other operating systems, declaring anything but their own choice unfit for use. Any Mac, Linux or Windows zealot will eagerly participate in such activity.

Since computers are capable of coalescing with one's natural work rhythm, I don't find it surprising that time passes quickly for experienced users. In fact, if time is passing quickly, maybe the designers of the operating system you're using actually did something right for once.

At that point, you might be able to comprehend how some computer geeks could find a "rush" from hacking.

-- Jeffrey Berg (email)
