|

All 5 books, Edward Tufte paperback $180

All 5 clothbound books, autographed by ET $280

Visual Display of Quantitative Information

Envisioning Information

Visual Explanations

Beautiful Evidence

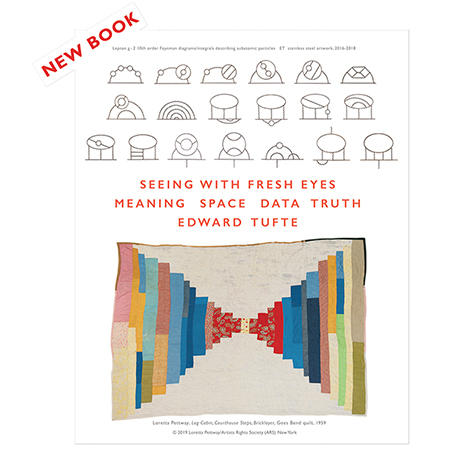

Seeing With Fresh Eyes

catalog + shopping cart

|

Edward Tufte e-books Immediate download to any computer: Visual and Statistical Thinking $5

The Cognitive Style of Powerpoint $5

Seeing Around + Feynman Diagrams $5

Data Analysis for Politics and Policy $9

catalog + shopping cart

New ET Book

Seeing with Fresh Eyes:

catalog + shopping cart

Meaning, Space, Data, Truth |

Analyzing/Presenting Data/Information All 5 books + 4-hour ET online video course, keyed to the 5 books. |

What suggestions do you have for reporting public school standardized and other test data to principals, teachers, our school board, and the public in table and graph format?

-- Stephen Miller (email)

The reporting of school standardized test data is a classic case of bad data presentation. Some of the common errors:

- Not explaining what the numbers actually mean (where the scale starts and ends, what a given number represents, etc.)

- Presenting numbers without their measurement errors or standard errors

- Presenting rank orders (especially in international comparisons) without the quantified scales

-- Sherman Dorn (email)
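Dorn's point about reporting each figure with its standard error can be illustrated with a short Python sketch. It assumes the simple binomial approximation, SE = sqrt(p(1 - p)/n), which treats each tested student as an independent draw and ignores the test's own measurement error; the function names and the school counts are hypothetical. Two schools with the same 75% pass rate carry very different uncertainty when one tested 60 students and the other 360.

```python
import math


def percent_with_se(met: int, tested: int) -> tuple[float, float]:
    """Return (percent meeting standard, approximate standard error in points).

    Assumption: binomial approximation, SE of a proportion p from n students
    is sqrt(p * (1 - p) / n). This ignores test measurement error.
    """
    if tested <= 0:
        raise ValueError("tested must be positive")
    p = met / tested
    se = math.sqrt(p * (1 - p) / tested)
    return 100 * p, 100 * se


def format_result(label: str, met: int, tested: int) -> str:
    """Report the percentage with a rough 95% margin (+/- 2 SE) and the n."""
    pct, se = percent_with_se(met, tested)
    return f"{label}: {pct:.0f}% met standard (+/- {2 * se:.0f} points, n={tested})"


if __name__ == "__main__":
    # Hypothetical counts, for illustration only.
    print(format_result("School A, grade 4", 45, 60))
    print(format_result("School B, grade 4", 270, 360))
```

Printing n next to each percentage lets readers see at a glance why a small subgroup "cell" can swing widely from year to year.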

Thanks to No Child Left Behind and similar state laws, we educators are busy presenting a lot of data to various audiences. For public presentations, I use the percentage of students who "met the standard," a concept embedded in our state testing. For the general public and the Board of Education, I use bar graphs showing that percentage for various schools and subgroups over a period of years. These are usually in PowerPoint (our Board President isn't happy unless he sees dancing graphs projected on the wall), but I supplement the graphs with handouts in table format. A lot of information is available on our state website, www.doe.state.de.us. Everyone has access to it, so I often simplify the data to make specific points in a presentation.

This whole area can be problematic because you may have to present data based on measurement concepts (percentiles, for example) that may not be familiar to the general public or even to many teachers.

Whether we agree with the testing emphasis or not, we still have to give people the information.

I'd appreciate any tips anyone has on presenting the "cell" information required under NCLB to the general public without oversimplifying it to the point of uselessness.

-- Karen Foster (email)

Use a supertable, like the NYTimes/CBS Poll table in chapter 9 of The Visual Display of Quantitative Information.

Also take a look at the sports and financial pages of a good newspaper for the design of tables that report performances. Those tables should give you an idea of the intensities with which performance data are routinely reported.

-- Edward Tufte
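A supertable in Tufte's sense is a dense grid that puts many comparisons on one page. As a rough sketch only (the layout, the `supertable` function, and the figures are all hypothetical, not taken from the NYTimes/CBS table or any state's reports), the Python below lays out percent-met-standard figures with one row per school and subgroup, one column per year, and a final column for the change across the period.

```python
# Hypothetical percent-met-standard figures, keyed by (school, subgroup), then year.
DATA = {
    ("School A", "All students"): {2005: 71, 2006: 74, 2007: 78},
    ("School A", "Low income"): {2005: 58, 2006: 60, 2007: 66},
    ("School B", "All students"): {2005: 82, 2006: 81, 2007: 85},
}


def supertable(data: dict, years: list[int]) -> str:
    """Lay out one row per (school, subgroup) and one column per year."""
    header = (f"{'School':<10}{'Subgroup':<14}"
              + "".join(f"{y:>6}" for y in years)
              + f"{'Change':>8}")
    lines = [header, "-" * len(header)]
    for (school, group), by_year in data.items():
        # Missing years print as "--" so gaps stay visible.
        cells = "".join(f"{by_year.get(y, '--'):>6}" for y in years)
        first, last = by_year.get(years[0]), by_year.get(years[-1])
        # Change from first to last year, in percentage points.
        if first is not None and last is not None:
            change = f"{last - first:+d}"
        else:
            change = "--"
        lines.append(f"{school:<10}{group:<14}{cells}{change:>8}")
    return "\n".join(lines)


if __name__ == "__main__":
    print(supertable(DATA, [2005, 2006, 2007]))
```

Missing years print as `--` rather than being silently dropped, so a gap in the record stays visible to the reader.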

The Department of Education in the State of New Hampshire has spent $871K on a five-year contract with the company Performance Pathways for a web-based product that gives public schools and districts across the state access to the scores of all students on the annual New England Common Assessment Program (NECAP). These are the data that determine whether schools have made Adequate Yearly Progress (AYP) and whether they are designated a School in Need of Improvement (SINI), and by extension whether the district is designated a District in Need of Improvement (DINI). Ultimately, schools and districts that cannot get out of SINI or DINI status after a set number of years end up under the control of the state Department of Education.

The students' scores on this exam have no bearing on their grades or promotion from one grade to the next, primarily because the test of third-grade material, for instance, is not given until October of the fourth grade. The students have nothing at stake in the test, which calls the data into question from the start. I have concerns about using any single measurement tool (never mind this particular multiple-guess one) as the trigger for a potential high-stakes response by the government, but my issue here is with the quality of the charts that the "Performance Tracker" system produces from these data. I do feel that if one understands what the test is actually measuring, the data itself has value to the classroom teacher.

Some of the issues I have begun pointing out are:

1) Only vertical bar charts and pie charts are available, and these do not always suit the data being analyzed or show comparisons properly. There are no stacked bar charts, scatter plots, horizontal bar charts, or line charts.

2) Units and scales of measurement are not indicated properly or consistently in the graphs.

3) The charts and tables lack accurate, descriptive titles, and titles are often missing altogether.

4) Axes are not always labeled, and when they are, they are not always labeled properly.

5) Variable types are not consistently placed on the conventional axes.

6) Although we currently have three years of NECAP testing data, I could not find an option to produce a line graph, the standard graph for displaying change over time.

7) The coloring is often meaningless and confusing, and inexplicably the same color is sometimes used twice in a single graph to represent two different things. Charts containing similar information use different color schemes, making comparisons between them impossible.

I have expressed my concerns to staff at the Department of Education, emphasizing that one of the areas on which students are tested in the mathematics portion of the NECAP itself is "Data, Statistics and Probability," and that the graphics produced by the reporting system do not meet the standards to which elementary school students are held in that very area. I have pointed out that, despite all my objections to the federal No Child Left Behind law, its language stipulates the importance of "scientifically-based methods," and that these poor graphics do not meet that standard of the law. I have told them that teachers and administrators are being told repeatedly that they must practice "data-driven decision-making" to improve their schools. I have reminded them that "data literacy" is a 21st-century requirement in education.

Nevertheless, the replies from the bureaucrats at the NH State Department of Education, unsurprisingly, have been to defend the product:

1) Lots of other school districts in and out of state have bought this product

2) It was selected after extensive review by many people who compared it with other products

3) The company has been around for ten years

4) Many people have been working with it successfully

5) We didn't use to have anything for looking at the data, so this is better than nothing

6) Lots of other people tell them that they like it

And the best one:

7) It's just your opinion

Faced with this high-stakes situation (millions of dollars being spent, the shaping of the next generation's education, and the need to compete in an increasingly information-based global economy), I am taking my role as a consumer of these graphics very seriously.

It is possible to log into the Performance Tracker system and access demo data at the following URL:

Username: trainer

Password: train

I don't think that "fixing" this product will make a fundamentally bad law better, but I needed to share how appalled I am by the low quality and high price tag of the data-management products being pitched as tools to help. The refusal of government bureaucrats to hold these companies to the same quality standards by which they judge the performance of students and schools is reprehensible.

-- Margo Burns (email)

I thoroughly enjoyed the April 2011 class Dr Tufte offered in Dallas. I asked this question again in person and received the same suggestion. It is somewhat surprising that, although test data have accumulated in the years since NCLB began, our thinking about test data, and our methods for presenting them, have not improved. I'd like to restart the discussion and see if inspiration strikes.

The Dallas Morning News does, in fact, run annual features on Texas test scores, putting data into supertables in much the way Dr Tufte suggests.

http://www.dallasnews.com/sharedcontent/dws/graphics/0810/taks/

http://www.dallasnews.com/sharedcontent/dws/graphics/0810/taks/ntex/ntexElem.html

Maps are also available: www.dallasnews.com/sharedcontent/dws/graphics/0710/schoolratings/index.html

The online version of the map is interactive, and the single-value indicator of city-wide school performance is available for each city over a five-year history. Or zoom in to all campuses in a city: http://www.dallasnews.com/sharedcontent/dws/graphics/0710/schoolratings/index.html?isd=[city name]

The single-value grade, on a four-point scale, runs unacceptable, acceptable, recognized, and exemplary (D, C, B, and A; failure is not an option). But so much detailed information is concealed behind that grade that the grade itself is almost useless, and it seems to "inflate" over time.

Each test sets two benchmarks, for "passing" and "commended" performance. Here again, some indicators are critical while others offer little more than bragging rights. Seeing that at least 70% of students tested pass reading is critical. Having a majority (51%) of those who pass do so at the "commended" level is brag-worthy.

www.dallasnews.com/sharedcontent/dws/graphics/0810/taks/ntex/schools/lancasterelem.html

-- Jeff Melcher (email)
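The two Texas benchmarks Melcher describes reduce to simple arithmetic on three counts per test. The Python sketch below assumes exactly the rules as stated in the post (a 70% pass floor for the critical indicator, and a majority of passers reaching the commended level for the bragging-rights indicator); it is not the official state rating formula, and the `benchmark_check` function and the counts are hypothetical.

```python
def benchmark_check(tested: int, passed: int, commended: int,
                    pass_floor: float = 0.70) -> dict:
    """Check the two benchmarks described in the post for one test at one school.

    `passed` counts all students at or above the passing standard;
    `commended` counts those passing at the commended level (a subset of `passed`).
    """
    if tested <= 0 or not (0 <= commended <= passed <= tested):
        raise ValueError("expected 0 <= commended <= passed <= tested, tested > 0")
    pass_rate = passed / tested
    # Assumption from the post: the commended share is a fraction of passers.
    commended_share = commended / passed if passed else 0.0
    return {
        "pass_rate": pass_rate,
        "meets_pass_floor": pass_rate >= pass_floor,     # the critical indicator
        "commended_share": commended_share,
        "majority_commended": commended_share > 0.5,     # the bragging-rights indicator
    }


if __name__ == "__main__":
    # Hypothetical counts for one school's reading test.
    result = benchmark_check(tested=200, passed=150, commended=80)
    print(f"Pass rate {result['pass_rate']:.0%}, "
          f"meets 70% floor: {result['meets_pass_floor']}; "
          f"commended share of passers {result['commended_share']:.0%}, "
          f"majority commended: {result['majority_commended']}")
```

Keeping the two indicators as separate fields, rather than folding them into one grade, preserves the distinction between what is critical and what is merely brag-worthy.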

